How can we reduce the carbon footprint of global computing? | MIT News

The voracious appetite for energy from the world’s computers and communications technology presents a clear threat for the globe’s warming climate. That was the blunt assessment from presenters in the intensive two-day Climate Implications of Computing and Communications workshop held on March 3 and 4, hosted by MIT’s Climate and Sustainability Consortium (MCSC), MIT-IBM Watson AI Lab, and the Schwarzman College of Computing.

The virtual event featured rich discussions and highlighted opportunities for collaboration among an interdisciplinary group of MIT faculty and researchers and industry leaders across multiple sectors, underscoring the power of academia and industry coming together.

“If we continue with the existing trajectory of compute energy, by 2040, we are supposed to hit the world’s energy production capacity. The increase in compute energy and demand has been increasing at a much faster rate than the world energy production capacity increase,” said Bilge Yildiz, the Breene M. Kerr Professor in the MIT departments of Nuclear Science and Engineering and Materials Science and Engineering, one of the workshop’s 18 presenters. This computing energy projection draws from the Semiconductor Research Corporation’s decadal report.

To cite just one example: Information and communications technology already accounts for more than 2 percent of global energy demand, which is on a par with the aviation industry’s emissions from fuel.

“We are the very beginning of this data-driven world. We really need to start thinking about this and act now,” said presenter Evgeni Gousev, senior director at Qualcomm.

Innovative energy-efficiency options

To that end, the workshop presentations explored a host of energy-efficiency options, including specialized chip design, data center architecture, better algorithms, hardware modifications, and changes in consumer behavior. Industry leaders from AMD, Ericsson, Google, IBM, iRobot, NVIDIA, Qualcomm, Tertill, Texas Instruments, and Verizon outlined their companies’ energy-saving programs, while experts from across MIT provided insight into current research that could yield more efficient computing.

Panel topics ranged from “Custom hardware for efficient computing” to “Hardware for new architectures” to “Algorithms for efficient computing,” among others.

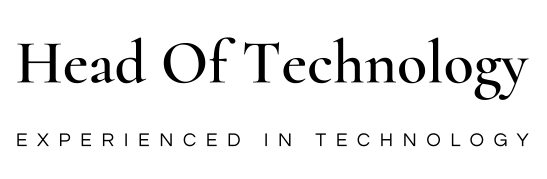

Visual representation of the conversation during the workshop session entitled “Energy Efficient Systems.”

Image: Haley McDevitt

The goal, said Yildiz, is to improve the energy efficiency associated with computing by more than a million-fold.

“I think part of the answer of how we make computing much more sustainable has to do with specialized architectures that have very high level of utilization,” said Darío Gil, IBM senior vice president and director of research, who stressed that solutions should be as “elegant” as possible.

For example, Gil illustrated an innovative chip design that uses vertical stacking to reduce the distance data has to travel, and thus reduces energy consumption. Surprisingly, more effective use of tape (a traditional medium for primary data storage), combined with specialized hard disk drives (HDD), can yield dramatic savings in carbon dioxide emissions.

Gil and presenters Bill Dally, chief scientist and senior vice president of research of NVIDIA; Ahmad Bahai, CTO of Texas Instruments; and others zeroed in on storage. Gil compared data to a floating iceberg in which we can have fast access to the “hot data” of the smaller visible part while the “cold data,” the large underwater mass, represents data that tolerates higher latency. Think about digital photo storage, Gil said. “Honestly, are you really retrieving all of those photographs on a continuous basis?” Storage systems should provide an optimized mix of HDD for hot data and tape for cold data based on data access patterns.
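The hot/cold split Gil describes can be pictured with a minimal Python sketch. It assumes a single recency rule decides placement; the 90-day cutoff, the `StoredObject` type, and the function names are hypothetical illustrations, not anything presented at the workshop.

```python
from dataclasses import dataclass


@dataclass
class StoredObject:
    name: str
    days_since_last_access: int


# Hypothetical cutoff: data untouched for longer than this is treated as cold.
COLD_AFTER_DAYS = 90


def choose_tier(obj: StoredObject, cold_after_days: int = COLD_AFTER_DAYS) -> str:
    """Return "hdd" for recently used (hot) data and "tape" for cold data."""
    return "tape" if obj.days_since_last_access > cold_after_days else "hdd"


def plan_placement(objects):
    """Group object names by the storage tier they would be placed on."""
    placement = {"hdd": [], "tape": []}
    for obj in objects:
        placement[choose_tier(obj)].append(obj.name)
    return placement


photos = [
    StoredObject("last_week_trip.jpg", 6),
    StoredObject("2015_birthday.jpg", 2400),
    StoredObject("passport_scan.png", 45),
]
placement = plan_placement(photos)
print(placement)
```

A real storage system would likely weigh access frequency, retrieval latency targets, and migration cost as well; recency alone is used here only to keep the idea visible.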

Bahai stressed the significant energy saving gained from segmenting standby and full processing. “We need to learn how to do nothing better,” he said. Dally spoke of mimicking the way our brain wakes up from a deep sleep: “We can wake [computers] up much faster, so we don’t need to keep them running in full speed.”

Several workshop presenters spoke of a focus on “sparsity,” the property of a matrix in which most of the elements are zero, as a way to improve efficiency in neural networks. Or as Dally said, “Never put off till tomorrow, where you could put off forever,” explaining efficiency is not “getting the most information with the fewest bits. It’s doing the most with the least energy.”
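To make the sparsity idea concrete, here is a minimal pure-Python sketch that compares a dense matrix-vector product with one that stores and multiplies only the nonzero weights. The matrix, the function names, and the use of a multiplication count as a stand-in for energy are all assumptions for illustration; this is not a method shown by any presenter.

```python
def dense_matvec(matrix, vector):
    """Multiply row by row, counting every multiplication, zeros included."""
    multiplies = 0
    result = []
    for row in matrix:
        total = 0.0
        for weight, x in zip(row, vector):
            total += weight * x
            multiplies += 1
        result.append(total)
    return result, multiplies


def to_sparse(matrix):
    """Keep only the nonzero entries of each row as (column, value) pairs."""
    return [[(j, w) for j, w in enumerate(row) if w != 0] for row in matrix]


def sparse_matvec(sparse_rows, vector):
    """Multiply using only stored nonzero entries, counting multiplications."""
    multiplies = 0
    result = []
    for row in sparse_rows:
        total = 0.0
        for j, w in row:
            total += w * vector[j]
            multiplies += 1
        result.append(total)
    return result, multiplies


# A small weight matrix in which most entries are zero.
weights = [
    [0, 0, 2, 0],
    [0, 0, 0, 0],
    [1, 0, 0, 3],
]
inputs = [1.0, 2.0, 3.0, 4.0]

dense_out, dense_ops = dense_matvec(weights, inputs)
sparse_out, sparse_ops = sparse_matvec(to_sparse(weights), inputs)
print(dense_out, dense_ops)    # same result, 12 multiplications
print(sparse_out, sparse_ops)  # same result, 3 multiplications
```

Both versions return the same vector; the sparse one simply never performs the work whose result is known to be zero.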

Holistic and multidisciplinary approaches

“We need both efficient algorithms and efficient hardware, and sometimes we need to co-design both the algorithm and the hardware for efficient computing,” said Song Han, a panel moderator and assistant professor in the Department of Electrical Engineering and Computer Science (EECS) at MIT.

Some presenters were optimistic about innovations already underway. According to Ericsson’s research, as much as 15 percent of global carbon emissions can be reduced through the use of existing solutions, noted Mats Pellbäck Scharp, head of sustainability at Ericsson. For example, GPUs are more efficient than CPUs for AI, and the progression from 3G to 5G networks boosts energy savings.

“5G is the most energy efficient standard ever,” said Scharp. “We can build 5G without increasing energy consumption.”

Companies such as Google are optimizing energy use at their data centers through improved design, technology, and renewable energy. “Five of our data centers around the globe are operating near or above 90 percent carbon-free energy,” said Jeff Dean, Google’s senior fellow and senior vice president of Google Research.

Yet, pointing to the possible slowdown in the doubling of transistors in an integrated circuit, known as Moore’s Law, “We need new approaches to meet this compute demand,” said Sam Naffziger, AMD senior vice president, corporate fellow, and product technology architect. Naffziger spoke of addressing performance “overkill.” For example, “we’re finding in the gaming and machine learning space we can make use of lower-precision math to deliver an image that looks just as good with 16-bit computations as with 32-bit computations, and instead of legacy 32b math to train AI networks, we can use lower-energy 8b or 16b computations.”
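As a rough illustration of what lower-precision math trades away, the following pure-Python sketch stores a handful of values as 8-bit integers with one shared scale factor and then measures the rounding error. Symmetric scaling to the range -127..127 is one common scheme chosen here as an assumption; the sample values and function names are hypothetical, and this is not AMD’s method.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical values standing in for a few network weights.
weights = [0.5, -1.27, 0.031, 1.0, -0.75]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)          # integers that each fit in 8 bits
print(max_error)  # bounded by about half of the scale factor
```

Each value now needs 8 bits instead of 32, at the cost of an error no larger than about half the scale factor; whether that error is acceptable depends on the workload.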

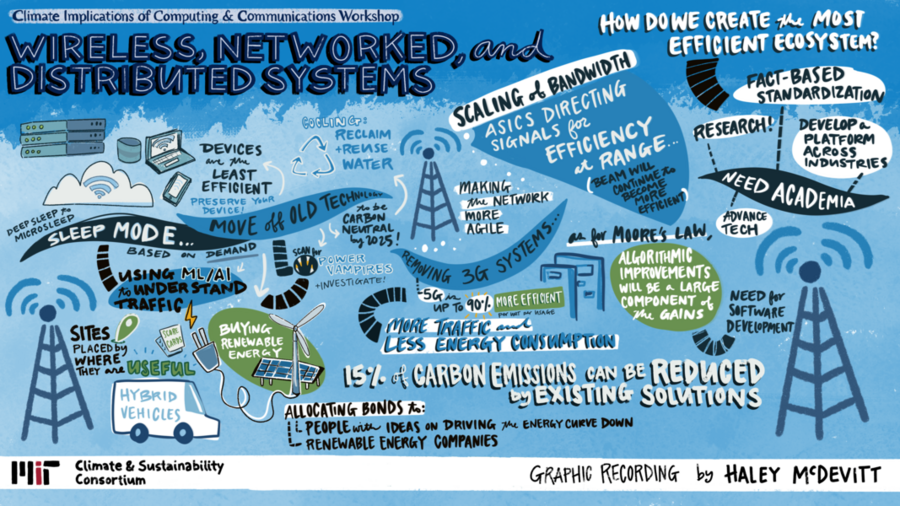

Visual representation of the conversation during the workshop session entitled “Wireless, networked, and distributed systems.”

Image: Haley McDevitt

Other presenters singled out compute at the edge as a prime energy hog.

“We also have to change the devices that are put in our customers’ hands,” said Heidi Hemmer, senior vice president of engineering at Verizon. As we think about how we use energy, it is common to jump to data centers, but it really starts at the device itself, and the energy that the devices use. Then, we can think about home web routers, distributed networks, the data centers, and the hubs. “The devices are actually the least energy-efficient out of that,” concluded Hemmer.

Some presenters had different perspectives. Several called for developing dedicated silicon chipsets for efficiency. However, panel moderator Muriel Medard, the Cecil H. Green Professor in EECS, described research at MIT, Boston University, and Maynooth University on the GRAND (Guessing Random Additive Noise Decoding) chip, saying, “rather than having obsolescence of chips as the new codes come in and in different standards, you can use one chip for all codes.”

Whatever the chip or new algorithm, Helen Greiner, CEO of Tertill (a weeding robot) and co-founder of iRobot, emphasized that to get products to market, “We have to learn to go away from wanting to get the absolute latest and greatest, the most advanced processor that usually is more expensive.” She added, “I like to say robot demos are a dime a dozen, but robot products are very infrequent.”

Greiner emphasized that consumers can play a role in pushing for more energy-efficient products, just as drivers began to demand electric cars.

Dean also sees an environmental role for the end user.

“We have enabled our cloud customers to select which cloud region they want to run their computation in, and they can decide how important it is that they have a low carbon footprint,” he said, also citing other interfaces that might allow consumers to decide which air flights are more efficient or what impact installing a solar panel on their home would have.

However, Scharp said, “Prolonging the life of your smartphone or tablet is really the best climate action you can do if you want to reduce your digital carbon footprint.”

Facing increasing demands

Despite their optimism, the presenters acknowledged the world faces increasing compute demand from machine learning, AI, gaming, and especially, blockchain. Panel moderator Vivienne Sze, associate professor in EECS, noted the conundrum.

“We can do a great job in making computing and communication really efficient. But there is this tendency that once things are very efficient, people use more of it, and this might result in an overall increase in the usage of these technologies, which will then increase our overall carbon footprint,” Sze said.

Presenters saw great potential in academic/industry partnerships, particularly from research efforts on the academic side. “By combining these two forces together, you can really amplify the impact,” concluded Gousev.

Presenters at the Climate Implications of Computing and Communications workshop also included: Joel Emer, professor of the practice in EECS at MIT; David Perreault, the Joseph F. and Nancy P. Keithley Professor of EECS at MIT; Jesús del Alamo, MIT Donner Professor and professor of electrical engineering in EECS at MIT; Heike Riel, IBM Fellow and head of science and technology at IBM; and Takashi Ando, principal research staff member at IBM Research. The recorded workshop sessions are available on YouTube.